Music has been part of human cultures across the globe for more than 50,000 years. Like most of the arts, it has been influenced by social and technical factors, and it has also played a role in the evolution of humankind (Wallin et al., 2000). For thousands of years music did not exist per se; it was the result of the continuous interaction between a musical instrument and a player. The instrument is the fundamental link that turns the musical ideas of composers and performers into sound. Instrument designers and makers, who are usually players as well, have a key role in building and providing these tools.

Prehistoric flutes, made out of carved bones and about 35,000 years old, have been found in several places across central Europe, and archeologists consider these the earliest artifacts made from durable materials that can be called primitive musical instruments. The Divje Babe Flute, found in Slovenia, is often cited as the oldest instrument, dating back to the Paleolithic, about 43,000 years ago. Before that, it is believed that generic tools, such as stones, or hand clapping were used to produce rhythms as an early form of music.

Musical instruments have evolved dramatically into a wide variety of forms across time and cultures (May, 1983). Although there are no reliable methods of determining the exact chronology of musical instruments within human history, it is possible to classify them accurately following the Hornbostel–Sachs classification, which is divided into four macro categories: idiophones, membranophones, chordophones and aerophones (Von Hornbostel & Sachs, 1914). In the early twentieth century, with the advent and spread of electroacoustic, electric, and later electronic or digital musical instruments, the Hornbostel–Sachs classification became incomplete, since it included only what we today call acoustic instruments. Therefore, in 1940 Sachs added the electrophones category, which includes:

- “51. Instruments having electric action (e.g. pipe organ with electrically controlled solenoid air valves);

- 52. Instruments having electrical amplification, such as the Neo-Bechstein piano of 1931, which had 18 microphones built into it;

- 53. Radioelectric instruments: instruments in which sound is produced by electrical means.”

– Curt Sachs (Sachs, 1940)

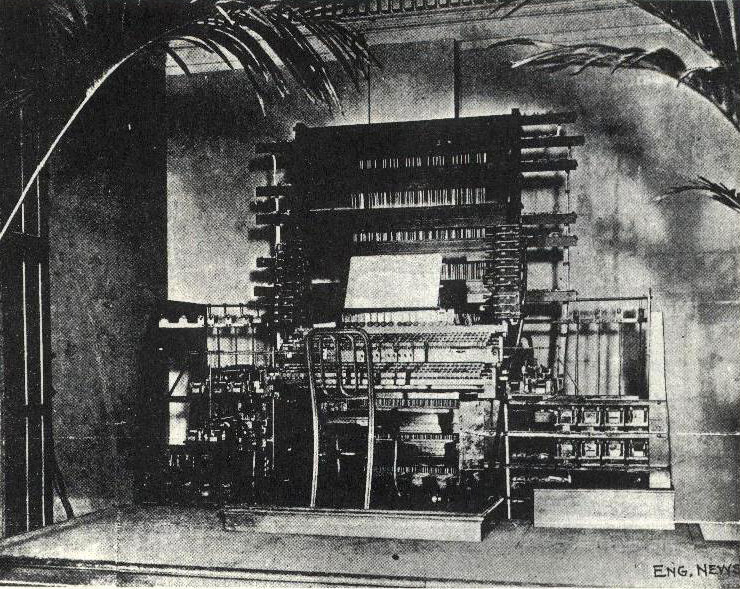

Today, looking back over more than a century of electrophones, and in order to keep the original classification consistent, ethnomusicologists such as Ellingson and Kartomi propose that only sub-category 53 should remain there, while the other two should be placed within aerophones and chordophones respectively.

The Telharmonium is the first non-acoustic instrument in history to present both a sound generation mechanism and a musical interface. It was a 200-ton electric organ developed by Thaddeus Cahill from 1897 onwards, which would inspire the Hammond organs some 30 years later. It generates musical sound electromechanically, in the form of an electric signal, by additive synthesis using tonewheels, and the signal is reproduced by primitive loudspeakers. Tonewheels were originally invented for radio communication purposes and were later adopted in electromechanical organs. Applying and hacking technologies originally designed for other purposes would be a common paradigm in the history of electric musical instruments until they reached maturity in the 1970s, when dedicated techniques started to be widely developed and investigated. Radio broadcasting technologies found wide application in the early sound synthesis and processing machines of the twentieth century.

Over the centuries, instrument design has hence turned from a craft into an exact science, encompassing sound generation and aesthetics as well as control aspects; for modern electronic instruments it involves a variety of disciplines such as mathematics, physics, engineering, computer science, psychology and music composition (Bernardini et al., 2007).

Milestones

The following introductions, innovations and trends represent milestones in the evolution towards the modern digital musical instrument (DMI), listed in chronological order.

1876 – Elisha Gray builds the Musical Telegraph, the first device to synthesize sound electrically; being based on communication technology, its sound could be transmitted over a telephone line. Gray generated and controlled sound using self-vibrating electromagnetic circuits, which represent an early form of oscillator.

1877 – Thomas Edison invents the phonograph, the first device that could record and reproduce sounds. Even though it was not directly related to musical instruments and was completely mechanical, it finally allowed sounds to travel across time and space. Sound recording technologies, such as tape and later samplers, will gain great importance in DMIs. The phonograph is considered the ancestor of the gramophone and the turntable, and in 1922 Darius Milhaud started to explore phonograph speed changes to obtain vocal transformations, which can be seen as an early attempt at sound manipulation or processing.

1906 – Lee DeForest introduces the triode audion, the first amplifying vacuum tube. Designed for radio communication purposes, it will find wide application in audio signal amplifiers and, in particular, in electronic oscillators, which are the basic building blocks of traditional sound synthesizers.

1913 – Luigi Russolo builds the Intonarumori, a set of mechanical devices generating noise-like sounds that recall those of the industrial revolution. Their importance lies not in the acoustic devices themselves, but in the first adoption of noise as material for musical composition. This paved the way for the concept that any sound can be music, which started to be applied more widely in the 1940s by Pierre Schaeffer and the Musique Concrète artists. As a result, later research on electrically generated sound could draw on an unlimited sound palette, and devices generating noisy or non-natural sounds would be considered instruments as well.

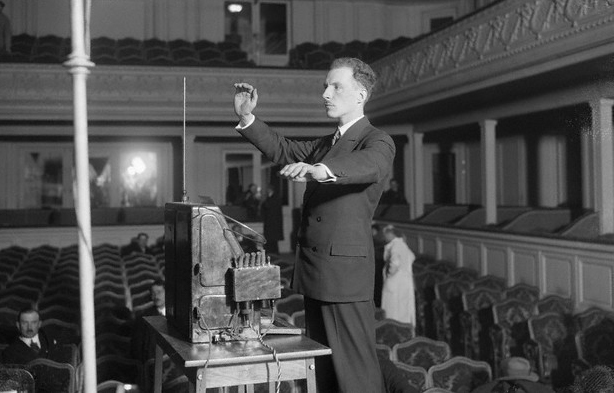

1920 – Lev Sergeyevich Termen presents the Theremin, the first touch-less and commercially available electronic instrument, in which two independent antennas sense the distance of the performer’s hands to control the pitch and amplitude of the sound. With its introduction, composers started to write music for electronic instruments. Performing with it was not an easy task because of the complete lack of physical feedback and the continuous nature of the parameters.

1928 – Fritz Pfleumer invents the first magnetic tape sound recorder, inspired by Valdemar Poulsen’s 1898 magnetic wire recorder. In 1935 tape recorders become commercially available, starting with the German Magnetophon, providing a better-quality, lower-cost, higher-capacity and more portable medium for sound recording and playback. The great innovation was the possibility of easily editing the contents offline by cutting and splicing the tape. Tape gave rise to the Chamberlin in 1949, and then to the Mellotron in 1963, the first sample-based synthesizer. The tape recorder was the essential tool for the Musique Concrète artists. In the 1930s “electronic music” was defined for the first time as “electronically produced sounds recorded on tape and arranged by the composer to form a musical composition” (Dictionary.com), highlighting the central role of the tape recorder.

1928 – Homer Dudley at Bell Labs conducts the first vocoder experiments; the machine was patented and introduced in 1939 under the name of Voder (Voice Operating Demonstrator). It was a complex machine consisting of manually operated oscillators, noise generators and a filter bank, which skilled operators used to produce recognizable speech. It was first used for voice encoding, and later, in the 1960s, it started to be used for musical purposes, with the sound of a synthesizer as the input of the filter bank.

1929 – Laurens Hammond establishes the company bearing his name, which produces electronic organs that also include early reverberation units. In 1939 he introduces the Novachord, the first commercially manufactured music synthesizer.

1937 – John Cage writes “The Future of Music: CREDO” (Cage, 1937), in which he foresees the future direction of electronic music and instruments. He predicted: “I believe that the use of noise to make music will continue and increase until we reach a music produced through the use of electrical instruments which will make available for musical purposes any and all sounds that can be heard. Photoelectric, film and mechanical mediums for the synthetic production of music will be explored.”; “Wherever we are, what we hear is mostly noise. When we ignore it, it disturbs us. When we listen to it, we find it fascinating.”; “The present methods of writing music […] will be inadequate for the composer, who will be faced with the entire field of sound.”

1937 – Harald Bode develops the Warbo Formant Organ, the earliest polyphonic synthesizer, which also provided formant filters and a dynamic envelope controller for the first time.

1937 – Evgeny Murzin creates the ANS synthesizer, a photoelectronic musical instrument that reverses the graphical sound recording method used in cinematography, generating sound from a drawn spectrogram.

1948 – Hugh Le Caine builds the Electronic Sackbut, the first voltage-controlled synthesizer; voltage control will later become a standard.

1951 – Raymond Scott invents the first notable fully analog electronic step sequencer, which contained 16 independent oscillators and tone circuits. Mechanical and electromechanical sequencer-like systems had existed since the late nineteenth century.

1953 – Iannis Xenakis introduces stochastic music, a method of composing music with computer programs based on probability systems.

1956 – Lejaren Hiller and Leonard Isaacson complete the Illiac Suite, the first use of computer-assisted algorithmic composition.

1956 – Raymond Scott creates the Clavivox, a synthesizer with a subassembly by Robert Moog. Voltage-controlled amplifiers, oscillators and filters became the basic building blocks of most analog synthesizers.

1957 – Max Mathews writes MUSIC, the first program for generating digital audio waveforms by direct synthesis on a digital computer (Mathews & Guttman, 1959). It will give rise to the MUSIC-N series, which Barry Vercoe reimplements in 1985 as Csound, a software still widely used today. The fast development of computers in the following decades will first allow real-time synthesis, and thereafter support the growing complexity of audio synthesis and processing routines.

1957 – RCA designers Herbert Belar and Harry Olson build the “Mark II Music Synthesizer”, installed at the Columbia-Princeton Music Centre. It was the size of a room, it was composed of an array of analog synthesis components, and it had a variable polyphony of four notes. Changing the timbre required modifying the interconnection of the modules, and sound could be generated only by programming the machine through a punched paper tape.

1959 – Iannis Xenakis introduces the concept and a primitive form of granular synthesis in Analogique A-B, his composition for string orchestra and tape, using analog tone generators and tape splicing. In 1975 Curtis Roads will implement the first granular synthesizer using MUSIC V. Real-time operation will be achieved only in 1986, by Barry Truax. Other synthesis techniques based on grains, elementary sound units, will later gain a wider reputation and application (Schwarz, 2006), such as Diemo Schwarz’s corpus-based concatenative synthesis (Schwarz, 2000) and audio mosaicing.

1964 – Robert Moog displays the Moog modular analog synthesizer at the Audio Engineering Society convention; it is much smaller than its predecessors and more intuitive to use. He introduced the logarithmic 1-volt-per-octave pitch control, which was adopted as an industry standard and allowed modular synthesizers from different manufacturers to work together. The work of Robert Moog in the 1960s also aimed at making these devices portable and accessible to musicians, not only to engineers.
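The 1-volt-per-octave convention can be illustrated with a few lines of arithmetic. The sketch below is only an illustration: the reference frequency at 0 V (`REF_FREQ_HZ`) and the function names are assumptions, since real modules are tuned by hand and do not share a fixed reference.

```python
import math

# Assumed frequency produced at 0 V (roughly middle C); hypothetical value.
REF_FREQ_HZ = 261.63


def cv_to_freq(volts, ref_freq_hz=REF_FREQ_HZ):
    """Each additional volt doubles the frequency (one octave up)."""
    return ref_freq_hz * 2.0 ** volts


def freq_to_cv(freq_hz, ref_freq_hz=REF_FREQ_HZ):
    """Inverse mapping: the control voltage is logarithmic in frequency."""
    return math.log2(freq_hz / ref_freq_hz)


def semitones_to_cv(semitones):
    """In equal temperament one semitone corresponds to 1/12 of a volt."""
    return semitones / 12.0


if __name__ == "__main__":
    for volts in (0.0, 1.0, 2.0, semitones_to_cv(7)):
        print(f"{volts:.4f} V -> {cv_to_freq(volts):.2f} Hz")
```

Because pitch intervals become voltage differences, transposing a patch amounts to adding a constant voltage, which is one reason a shared scaling made modules from different makers easy to combine.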

1967 – John Chowning discovers the FM synthesis algorithm at Stanford University (Chowning, 1973). It generates rich and complex timbres with a small number of oscillators, which, in the digital domain, minimizes the number of operations required per sample.
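As a rough illustration of why FM is cheap, the sketch below computes a simple two-oscillator FM tone, in which a modulating sine wave is added to the phase of a carrier sine wave. The function name, parameter names and default sample rate are assumptions made for this example, and it is not meant to reproduce any particular commercial implementation.

```python
import math


def fm_tone(carrier_hz, modulator_hz, index, num_samples, sample_rate=44100):
    """Simple FM: sin(2*pi*fc*t + index * sin(2*pi*fm*t)).

    Only two sine evaluations are needed per sample, yet the modulation
    index controls how many sidebands (partials) appear in the spectrum.
    """
    samples = []
    for n in range(num_samples):
        t = n / sample_rate
        modulation = index * math.sin(2.0 * math.pi * modulator_hz * t)
        samples.append(math.sin(2.0 * math.pi * carrier_hz * t + modulation))
    return samples


if __name__ == "__main__":
    tone = fm_tone(carrier_hz=440.0, modulator_hz=220.0, index=3.0, num_samples=8)
    print([round(s, 4) for s in tone])
```

With `index` set to zero the result is a plain sine wave at the carrier frequency; raising the index spreads energy into sidebands spaced by the modulator frequency.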

1969 – Lejaren Hiller and Pierre Ruiz introduce the first physical modeling synthesis technique based on a finite difference model of a vibrating string to generate plucked and struck string tones (Hiller and Ruiz, 1971).

1970 – Robert Moog releases the Minimoog, which integrates the modules of the earlier versions in a single device, including a keyboard with pitch bend and modulation wheels, which will later become a standard. The integration reduced synthesis flexibility, but it resulted in a miniaturized and portable instrument, thanks also to solid-state components. It then gained popularity in live performance and in pop music.

1976 – Polyphony starts to be featured in several integrated analog synthesizers, especially from Yamaha, but they were still complex and bulky devices. In 1977 Sequential Circuits introduced the Prophet-5, a more practical and low-cost polyphonic analog synthesizer, which used a microprocessor for control purposes for the first time and also provided a digital memory to save and recall all settings.

1978 – Peter Vogel and Kim Ryrie design the Fairlight CMI, the first groundbreaking polyphonic digital sampler, which opened the door to sample-based sound synthesis.

1978 – Soundstream builds the Digital Editing System that is considered the first Digital Audio Workstation (DAW), based on the computer technology available at that time and custom audio cards with both analog and digital I/O.

1979 – Claude Cadoz, Annie Luciani, and Jean-Loup Florens develop CORDIS-ANIMA, a physical description language for musical instruments based on networks of masses and springs (Cadoz, Luciani, and Florens, 1993).

1980 – A group of DMI manufacturers and musicians meets to standardize the protocol that instruments use to communicate control instructions, establishing the Musical Instrument Digital Interface (MIDI). The standard specification was published in 1983. This key introduction boosted the development of sound generation units without a musical interface, and of musical interfaces generating only MIDI output. It enabled users to personalize the control strategy of their instruments by interconnecting arbitrary interfaces, hardware or software sequencers, and synthesizers. It also allows multiple instruments to be controlled from a single interface, making stage setups more portable.
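To make the idea of "control instructions" concrete, the sketch below builds three common MIDI channel messages as raw bytes. Each message is a status byte (message type in the upper four bits, channel in the lower four) followed by two 7-bit data bytes. The helper names are assumptions for this example, and the code only constructs the bytes; sending them to a device would need a MIDI port library, which is not shown.

```python
def _check_range(value, upper, name):
    """Reject values that do not fit the field (hypothetical helper)."""
    if not 0 <= value <= upper:
        raise ValueError(f"{name} must be in 0..{upper}, got {value}")


def channel_message(status, channel, data1, data2):
    """Pack a three-byte channel voice message.

    `channel` is the wire value 0..15 (shown to users as 1..16);
    data bytes are limited to 7 bits (0..127).
    """
    _check_range(channel, 15, "channel")
    _check_range(data1, 127, "data1")
    _check_range(data2, 127, "data2")
    return bytes([status | channel, data1, data2])


def note_on(channel, note, velocity):
    return channel_message(0x90, channel, note, velocity)


def note_off(channel, note, velocity=0):
    return channel_message(0x80, channel, note, velocity)


def control_change(channel, controller, value):
    return channel_message(0xB0, channel, controller, value)


if __name__ == "__main__":
    print(note_on(0, 60, 100).hex(" "))       # middle C on the first channel
    print(control_change(1, 7, 127).hex(" "))  # controller 7 on the second channel
```

Because the message carries only a note number and a velocity rather than audio, any interface that emits these bytes can drive any sound unit that understands them.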

1983 – Yamaha launches the DX-7, the first stand-alone digital synthesizer, based on Chowning’s FM synthesis, which was licensed exclusively to Yamaha.

1984 – Michel Waisvisz builds The Hands, the first experimental gestural musical interface based on the conversion of analog sensor data into MIDI control signals. It set a trend that continues today, and it raised the issue of mapping between the performer’s gestures and instrumental control.

1984 – Roland introduces the MKB-1000 and the MKB-300, the world’s first MIDI controller keyboards.

1986 – Miller Puckette develops at IRCAM a non-graphical program to control the 4X synthesizer (Favreau et al., 1986) (Puckette, 1986). In 1988 he develops the graphical version, called The Patcher (Puckette, 1988), which will later be renamed Max after Max Mathews and then commercialized by Cycling’74. It has since evolved into Max/MSP, a modular software environment that also includes Digital Signal Processing (DSP) functionality and allows dataflow programming through the graphical interconnection of routines that exist in the form of shared libraries. It is widely used by researchers and software designers, but also by performers, artists and composers, because it does not require coding expertise.

1988 – Korg releases the M1, the first music workstation with an onboard MIDI sequencer and a rich palette of sounds. It is the top-selling DMI of all time, with 250,000 units sold.

1991 – Jean-Marie Adrien introduces modal methods for physical modeling synthesis, which rely on the decomposition of the dynamics of a system into modes, each of which oscillates at a given natural frequency (Adrien, 1991). They were later implemented in the Modalys/MOSAIC environment by Joseph Derek Morrison (Morrison and Adrien, 1993).
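The modal idea can be sketched very simply: if each mode is treated as an independent damped oscillator, the response of a struck object is a sum of exponentially decaying sinusoids. The code below is a minimal illustration under that assumption; the mode frequencies, decay rates and amplitudes are made-up values, and this is not how Modalys/MOSAIC is implemented.

```python
import math

# Hypothetical modes: (frequency in Hz, decay rate in 1/s, amplitude).
EXAMPLE_MODES = [
    (220.0, 8.0, 1.0),
    (563.0, 12.0, 0.5),
    (1021.0, 20.0, 0.25),
]


def modal_response(modes, num_samples, sample_rate=44100):
    """Sum the contribution of every mode to an impulse-like excitation."""
    out = [0.0] * num_samples
    for freq_hz, decay, amplitude in modes:
        for n in range(num_samples):
            t = n / sample_rate
            envelope = amplitude * math.exp(-decay * t)
            out[n] += envelope * math.sin(2.0 * math.pi * freq_hz * t)
    return out


if __name__ == "__main__":
    signal = modal_response(EXAMPLE_MODES, 44100)
    print(f"peak in first 0.25 s: {max(abs(s) for s in signal[:11025]):.3f}")
    print(f"peak in last 0.25 s:  {max(abs(s) for s in signal[-11025:]):.6f}")
```

Changing the list of modes changes the perceived material and shape of the virtual object, while the synthesis loop stays the same.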

1992 – Julius Orion Smith III presents simple and efficient delay-line structures to model wave propagation in objects such as strings and acoustic tubes (Smith, 1992).
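A heavily simplified sketch of the delay-line idea follows. It uses a single recirculating delay line with a two-point averaging loss filter, in the style of the Karplus-Strong plucked string, rather than the full bidirectional waveguide described by Smith; the function name, the `damping` value and the noise-burst excitation are assumptions chosen for this example.

```python
import random
from collections import deque


def plucked_string(freq_hz, num_samples, sample_rate=44100, damping=0.996, seed=0):
    """Recirculate a noise burst through a delay line with losses.

    The delay length sets the pitch (one trip around the loop per period);
    the averaging filter and `damping` model energy lost on each trip.
    """
    delay_len = max(2, round(sample_rate / freq_hz))
    rng = random.Random(seed)
    # Initial "pluck": fill the string with random displacement.
    line = deque(rng.uniform(-1.0, 1.0) for _ in range(delay_len))
    out = []
    for _ in range(num_samples):
        current = line.popleft()
        out.append(current)
        # Feed back the average of two neighboring samples, slightly attenuated.
        line.append(damping * 0.5 * (current + line[0]))
    return out


if __name__ == "__main__":
    tone = plucked_string(220.0, 44100)
    print(f"first-period peak: {max(abs(s) for s in tone[:200]):.3f}")
    print(f"last-period peak:  {max(abs(s) for s in tone[-200:]):.3f}")
```

The per-sample cost is one addition and two multiplications regardless of how rich the resulting tone is, which hints at why delay-line models were attractive for real-time hardware.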

1993 – Steinberg releases Cubase Audio, a DAW running on the Atari Falcon 030 that provides 8-track recording and playback with built-in DSP effects using only native hardware.

1994 – Yamaha presents the VL-1, the first commercial physical modeling synthesizer, developed in cooperation with Stanford University and based on Julius Orion Smith III’s digital waveguide synthesis algorithm.

1996 – Miller Puckette presents Pure Data (Puckette, 1996), a software similar to Max/MSP in scope and design, released as open source one year later.

1997 – Matt Wright and Adrian Freed present the Open Sound Control (OSC) protocol (Wright & Freed, 1997). More consistent with modern technologies, it provides several improvements over MIDI, such as higher resolution and flexible symbolic paths. Its support for network-based transport mechanisms allowed existing infrastructure, such as local networks or the internet, to be used to exchange control messages. This enabled network-based musical performances, in which the performer’s interface and the sound generation unit could be in disparate parts of the globe.
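The two improvements mentioned above, symbolic paths and higher resolution, are visible in the binary layout of an OSC message: a padded address string, a padded type-tag string, and big-endian 32-bit arguments, compared with MIDI's 7-bit data bytes. The sketch below encodes such a message using only the standard library. The address `/synth/freq` is a hypothetical example, only int, float and string arguments are handled, and the resulting bytes would still need to be sent over a transport such as UDP.

```python
import struct


def _osc_string(text):
    """ASCII string, null-terminated and zero-padded to a multiple of 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address, *args):
    """Encode an OSC message with int32 ('i'), float32 ('f') and string ('s') arguments."""
    type_tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            raise TypeError("bool arguments are not handled in this sketch")
        if isinstance(arg, int):
            type_tags += "i"
            payload += struct.pack(">i", arg)
        elif isinstance(arg, float):
            type_tags += "f"
            payload += struct.pack(">f", arg)
        elif isinstance(arg, str):
            type_tags += "s"
            payload += _osc_string(arg)
        else:
            raise TypeError(f"unsupported argument type: {type(arg).__name__}")
    return _osc_string(address) + _osc_string(type_tags) + payload


if __name__ == "__main__":
    packet = osc_message("/synth/freq", 440.0)  # hypothetical address
    print(len(packet), packet)
```

Since the address is plain text, a receiver can expose a tree of named parameters rather than a fixed table of controller numbers.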

1996 – Steinberg introduces Virtual Studio Technology (VST) in Cubase VST, which offers 32 audio tracks with DSP, together with the related specification and Software Development Kit (SDK). VST is a standard cross-platform software interface to integrate, or “plug in”, soft synthesizers, samplers, drum machines and effects into audio editors, hard disk recording systems and DAWs. This introduction considerably promoted the development of novel software synthesizers and effects, as well as the software emulation and enhancement of vintage devices.

2003 – Perry Cook and Ge Wang release ChucK, a concurrent and strongly timed audio programming language. It is used for synthesis, performance and composition, and it is very popular among live coding artists.

2004 – JazzMutant releases Lemur, the first multi-touch screen OSC control surface. The use of a touch screen makes musical controllers customizable and reconfigurable devices that can fit different purposes or user preferences, even though they lack haptic feedback.

2005 – Sergi Jordà, Marcos Alonso, Martin Kaltenbrunner and Günter Geiger present the Reactable (Jordà et al., 2005), the first complete tabletop tangible user interface for musical applications. By positioning the tangible blocks on the table and interacting with them through the visual display, performers control the modules of a virtual modular synthesizer. It also includes other module types such as samplers, sequencers, triggers or tonalizers.

2005 – Dan Trueman and Perry Cook found the Princeton Laptop Orchestra (PLOrk) (Trueman et al., 2006), which has inspired the formation of other laptop orchestras across the world. They applied the traditional orchestra model to modern musical technology. Each “laptopist” performs using a laptop with an optional musical controller, connected to hemispherical speakers that reproduce the way traditional acoustic instruments diffuse their sound in space. The conductor makes use of video and a wireless network to augment their ability to direct and organize large ensembles. The Stanford Mobile Phone Orchestra (Wang et al., 2008) will follow in 2008.

2008 – Hexler releases TouchOSC for iOS, an application that allows easy implementation of customized graphical touch control systems on tablets and mobile devices. The increasing computational power of general-purpose mobile devices, their popularity, the interfacing versatility of multi-touch screens, the possibility of exploiting the internal sensors, and the large number of developers led to the appearance of a variety of mobile musical applications. These included emulations of several classic electronic musical devices, applications that replaced hardware based on custom touch screens such as the Lemur, and hybrid hardware extensions such as the AKAI MPC Fly 30.

2009 – Ableton and Cycling’74 release Max for Live, bringing to the popular music sequencer and DAW Live the patching capabilities of Max/MSP. This integration trend will continue with the 2011 release of Csound for Live.

2012 – Keith McMillen introduces the QuNeo, the first commercial touch controller, with OSC support, that features an array of pads supporting two-dimensional location and pressure as additional control dimensions.

Over the same time span, a variety of audio effects and signal processing techniques were developed, often in the context of radio broadcasting. These were introduced either as an integral part of a DMI or as separate units to alter sounds.

5/17/2010, SF Music Tech, panel discussion on “The Future of Musical Instruments” with Roger, David Wessel, Max Mathews, John Chowning and Ge Wang

References

Adrien, J. M. 1991. “Physical Model Synthesis: The Missing Link.” In Representations of Musical Signals, edited by G. De Poli, A. Piccialli, and C. Roads, 269–97. MIT Press.

Bernardini, N., X. Serra, M. Leman, G. Widmer, and G. De Poli. 2007. A Roadmap for Sound and Music Computing. The S2S Consortium. http://smcnetwork.org/roadmap.

Cadoz, C., A. Luciani, and J. L. Florens. 1993. “CORDIS-ANIMA: A Modeling and Simulation System for Sound and Image Synthesis.” Computer Music Journal 17 (1): 19–29.

Cage, John. 1937. “The Future Of Music: Credo.”

Chowning, J. 1973. “The Synthesis of Complex Audio Spectra by Means of Frequency Modulation.” Journal of the Audio Engineering Society 21 (7).

Dictionary.com. 2013. “Electronic Music.” Dictionary.com Unabridged. Random House, Inc. Accessed December 18. https://www.dictionary.com/browse/electronic music.

Favreau, E., M. Fingerhut, O. Koechlin, P. Potacsek, M. Puckette, and R. Rowe. 1986. “Software Developments for the 4X Real-Time System.” In Proceedings of the 1986 International Computer Music Conference, 43–46. San Francisco, US.

Hiller, L., and P. Ruiz. 1971. “Synthesizing Musical Sounds by Solving the Wave Equation for Vibrating Objects: Part I.” Journal of the Audio Engineering Society 19 (6): 462–70.

Jordà, S., M. Kaltenbrunner, G. Geiger, and R. Bencina. 2005. “The reacTable*.” In Proceedings of the 2005 International Computer Music Conference. Barcelona, Spain.

Mathews, M. V., and N. Guttman. 1959. “Generation of Music by a Digital Computer.” In Proceedings of the 3rd International Congress on Acoustics. Amsterdam, Netherlands.

May, Elizabeth. 1983. Musics of Many Cultures: An Introduction. University of California Press.

Morrison, D., and J. M. Adrien. 1993. “MOSAIC: A Framework for Modal Synthesis.” Computer Music Journal 17 (1): 45–56.

Puckette, M. 1986. “Interprocess Communication and Timing in Real-Time Computer Music Performance.” In Proceedings of the 1986 International Computer Music Conference, 43–46. Den Haag, Netherlands.

Puckette, M. 1988. “The Patcher.” In Proceedings of the 1988 International Computer Music Conference, 420–29. Cologne, Germany.

Puckette, M. 1996. “Pure Data.” In Proceedings of the 1996 International Computer Music Conference, 224–27. Hong Kong, China.

Sachs, Curt. 1940. The History of Musical Instruments. Dover Publications.

Schwarz, D. 2000. “A System for Data-Driven Concatenative Sound Synthesis.” In Proceedings of the 3rd International Conference on Digital Audio Effects. Verona, Italy.

Schwarz, D. 2006. “Concatenative Sound Synthesis: The Early Years.” Journal of New Music Research 35 (1): 3–22.

Smith, J. O. 1992. “Physical Modeling Using Digital Waveguides.” Computer Music Journal 16 (4): 74–91.

Trueman, Daniel, P. R. Cook, Scott Smallwood, and Ge Wang. 2006. “PLOrk: The Princeton Laptop Orchestra, Year 1.” In Proceedings of the 2006 International Computer Music Conference, 443–50. New Orleans, US.

Von Hornbostel, E. M., and C. Sachs. 1914. “Hornbostel–Sachs.” In Zeitschrift Für Ethnologie, 46:553–90. Braunschweig, A. Limbach [etc.].

Wallin, N. L., B. Merker, and S. Brown. 2000. The Origins of Music. Cambridge: MIT Press.

Wang, Ge, Georg Essl, and Henri Penttinen. 2008. “Do Mobile Phones Dream of Electric Orchestras?” In Proceedings of the 2008 International Computer Music Conference. Belfast, Northern Ireland.

Wright, Matthew, and Adrian Freed. 1997. “Open Sound Control: A New Protocol for Communicating with Sound Synthesizers.” In Proceedings of the 1997 International Computer Music Conference. Thessaloniki, Greece.